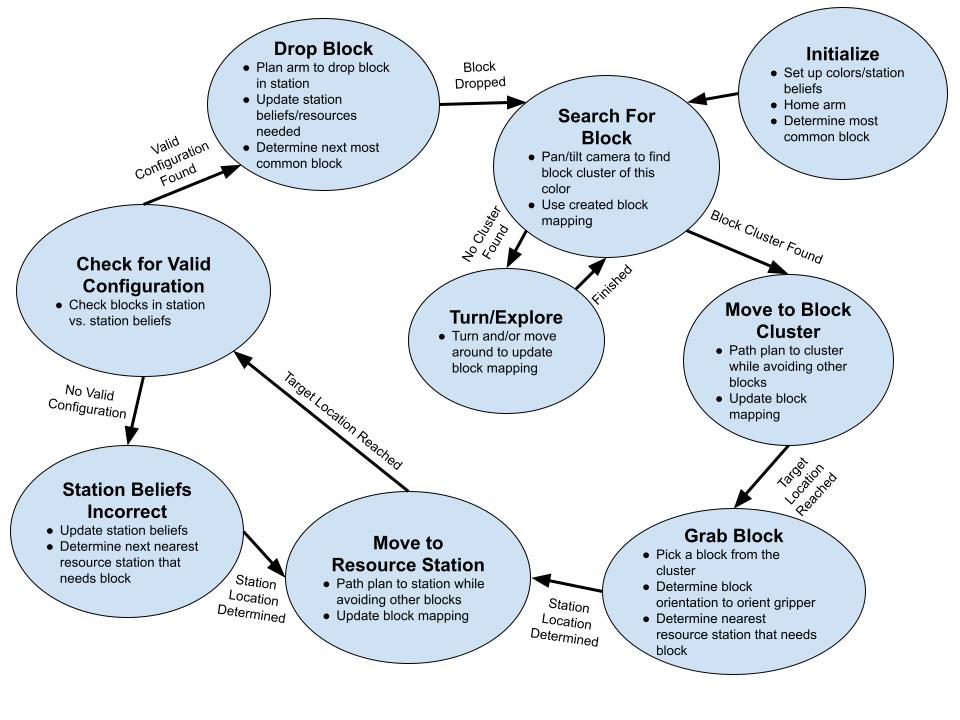

State Machine

The collaboration task is broken into a series of sub-tasks that the robot must complete in sequence to achieve its overall goal. Each sub-task defines a state: the actions and behavior expected of the robot in that state, and the events required for the robot to move to a different state. The accompanying figure shows the state machine that defines these sub-tasks and how they link together to complete the overall goal.

The robot starts in an initialization state before entering the task loop. In this state, the robot determines which colors it can manipulate, initializes its set of station beliefs for the resource stations, homes the arm, and determines the most common block color among the resource stations so that it knows which block to search for first. Once these initialization tasks are complete, the robot moves to the searching state.
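
As an illustration of the last initialization step, the following is a minimal sketch of how the first target color could be chosen. It assumes that "most common" means the color needed in the largest total quantity across all stations, and that the requirements are stored as per-station dictionaries of color counts. The function name, the data layout, and the alphabetical tie-break are all hypothetical and not taken from our scripts.

```python
from collections import Counter


def most_common_needed_color(station_needs, usable_colors):
    """Pick the color needed most across all stations.

    station_needs: {station_name: {color: count_still_needed}} (assumed layout)
    usable_colors: set of colors this robot is able to manipulate
    Returns None when no usable color is needed anywhere.
    """
    totals = Counter()
    for needs in station_needs.values():
        for color, count in needs.items():
            if color in usable_colors and count > 0:
                totals[color] += count
    if not totals:
        return None
    # Highest total first; ties are broken alphabetically so the result is deterministic.
    return min(totals, key=lambda color: (-totals[color], color))


# Hypothetical example data.
station_needs = {
    "station_a": {"red": 2, "blue": 1},
    "station_b": {"red": 1, "green": 3},
}
first_target = most_common_needed_color(station_needs, {"red", "blue"})
```

The same function could be called again after each drop, with updated counts, to pick the next color to search for.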

In the searching state, the robot moves the camera around to find a cluster of blocks of the desired color, updating its block map as it does so. If no cluster is found, the robot turns and explores for a period of time to update the block map, and then resumes searching. If the robot finds a block cluster, it transitions to the moving towards block state.

In the moving towards block state, the robot plans a path to the cluster of blocks while avoiding the other blocks recorded in the map. The block map is updated continuously while the robot moves. Once the target location has been reached, the robot moves to the grabbing state.

In the grabbing state, the robot picks a block from the cluster and orients the gripper to match the block's orientation. It then determines the nearest resource station that needs that block and transitions to the moving to resource station state.
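
The station selection at the end of the grabbing state could look like the sketch below. It assumes straight-line distance in the map frame is a good enough proxy for travel cost and that station beliefs record how many blocks of each color a station still needs. The function name, the `excluded` parameter, and the data layout are hypothetical.

```python
import math


def nearest_station_needing(color, robot_xy, station_positions, station_beliefs, excluded=()):
    """Return the name of the closest station believed to need `color`.

    robot_xy:          (x, y) of the robot in the map frame
    station_positions: {station_name: (x, y)}
    station_beliefs:   {station_name: {color: count_still_needed}} (assumed layout)
    excluded:          stations to skip, e.g. ones just found not to need the block
    Returns None when no station is believed to need the color.
    """
    candidates = [
        name
        for name, needs in station_beliefs.items()
        if needs.get(color, 0) > 0 and name not in excluded
    ]
    if not candidates:
        return None
    # Straight-line distance; a planner-based path length could be swapped in here.
    return min(candidates, key=lambda name: math.dist(robot_xy, station_positions[name]))


# Hypothetical example data.
positions = {"station_a": (1.0, 0.0), "station_b": (5.0, 0.0)}
beliefs = {"station_a": {"red": 1}, "station_b": {"red": 2}}
target = nearest_station_needing("red", (0.0, 0.0), positions, beliefs)
```

Passing the station that was just visited in `excluded` gives the "next nearest" station when a belief turns out to be wrong.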

In the moving to resource station state, as in the other moving state, the robot plans a path to the station while avoiding the blocks in the map, and the block map continues to be updated during the move. Once the resource station has been reached, the robot checks whether the blocks currently in the station match its station beliefs. If they do not, the robot updates its station beliefs, determines the next nearest resource station that needs the block, and re-enters the moving to resource station state with the new target. Otherwise, if the configuration is valid, the robot transitions to the dropping state.
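
The belief check on arrival can be sketched as a single comparison. This assumes, purely for illustration, that both the observation and the belief for a station are dictionaries of block counts per color; `check_station` is a hypothetical name.

```python
def check_station(station, observed, beliefs):
    """Compare what the camera sees at a station with the stored belief.

    Returns True when they agree, meaning the robot may proceed to dropping.
    Otherwise the belief is overwritten with the observation and False is
    returned, meaning the robot should pick a new station and keep moving.
    """
    if beliefs.get(station) == observed:
        return True
    beliefs[station] = dict(observed)  # copy so later edits to `observed` do not leak in
    return False


# Hypothetical example: the belief is stale on the first visit.
beliefs = {"station_a": {"red": 1}}
first_check = check_station("station_a", {"red": 2}, beliefs)
second_check = check_station("station_a", {"red": 2}, beliefs)
```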

In the dropping state, the robot plans an arm motion to drop the block at a spot in the station that is not already occupied by another block. It then updates its station beliefs and its understanding of the resources still needed, and from this determines the next most common block. The robot then transitions back to the searching state, and the loop continues until the allotted time runs out.
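
The loop described above can be summarized as a transition table. The sketch below is one possible encoding, not our actual implementation: the state names follow the text, while the event names are hypothetical labels for the conditions described in each state.

```python
from enum import Enum, auto


class State(Enum):
    INITIALIZATION = auto()
    SEARCHING = auto()
    MOVING_TO_BLOCK = auto()
    GRABBING = auto()
    MOVING_TO_STATION = auto()
    DROPPING = auto()


class Event(Enum):
    INIT_DONE = auto()
    EXPLORATION_FINISHED = auto()  # no cluster found; explored for a while
    CLUSTER_FOUND = auto()
    TARGET_REACHED = auto()
    STATION_SELECTED = auto()
    BELIEF_MISMATCH = auto()       # station contents differ from beliefs
    STATION_VALID = auto()
    BLOCK_DROPPED = auto()


# (current state, event) -> next state, following the description in this section.
TRANSITIONS = {
    (State.INITIALIZATION, Event.INIT_DONE): State.SEARCHING,
    (State.SEARCHING, Event.EXPLORATION_FINISHED): State.SEARCHING,
    (State.SEARCHING, Event.CLUSTER_FOUND): State.MOVING_TO_BLOCK,
    (State.MOVING_TO_BLOCK, Event.TARGET_REACHED): State.GRABBING,
    (State.GRABBING, Event.STATION_SELECTED): State.MOVING_TO_STATION,
    (State.MOVING_TO_STATION, Event.BELIEF_MISMATCH): State.MOVING_TO_STATION,
    (State.MOVING_TO_STATION, Event.STATION_VALID): State.DROPPING,
    (State.DROPPING, Event.BLOCK_DROPPED): State.SEARCHING,
}


def next_state(state, event):
    """Return the successor state; events that do not apply leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events, start=State.INITIALIZATION):
    """Feed a sequence of events through the table and return the visited states."""
    history = [start]
    for event in events:
        history.append(next_state(history[-1], event))
    return history


# One full pass through the loop, with a stale station belief along the way.
one_loop = run([
    Event.INIT_DONE,
    Event.CLUSTER_FOUND,
    Event.TARGET_REACHED,
    Event.STATION_SELECTED,
    Event.BELIEF_MISMATCH,
    Event.STATION_VALID,
    Event.BLOCK_DROPPED,
])
```

Keeping the transitions in a table separates the "when do we switch" logic from the behavior executed inside each state, which makes it easier to compare against the state machine figure.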

Current Functionality

Due to roadblocks and challenges that we faced on this project throughout the quarter, we reduced the scope of what we hoped to complete. We focused on achieving one loop of our state machine: moving the Locobot towards a block of our desired color, picking up the block, moving to a set resource station location, and dropping the block.

We modified a few of the given scripts to achieve our goal. The given scripts are linked here in the ME326 Collaborative Robotics GitHub workspace.

First, we used the given matching_ptcld_srv.cpp (example code for image color filtering to a 3D point) to locate a block of our desired color and get back its 3D point. Next, we modified the locobot_example_motion.py script (A to B example code) to subscribe to the 3D point topic and plan a path to that location, stopping some distance, L, short of the block so that the arm can later grab it. We also modified this script to use ROS topics and conditional statements to update the robot's state via our state machine, and we used the same modified script to plan a path to a given resource station location. Finally, we modified the locobot_motion_example.py script (the given arm planning and gripper controller script) to plan the motion of the arm down to the block for grasping. We similarly modified this script so that our state machine could run in conjunction with the base movement script.
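
To illustrate the standoff distance L, the sketch below computes a base goal that stops short of the block along the straight line from the robot to the block. It is a geometric illustration only, written without ROS so it can be run on its own. The function name and the choice to face the block at the goal are assumptions, not a description of the modified script.

```python
import math


def standoff_goal(robot_xy, block_xy, standoff):
    """Return (x, y, heading) for a base goal `standoff` meters short of the block.

    The goal lies on the line from the robot to the block, and the heading
    (radians, map frame) points at the block so the arm can reach forward.
    """
    dx = block_xy[0] - robot_xy[0]
    dy = block_xy[1] - robot_xy[1]
    distance = math.hypot(dx, dy)
    heading = math.atan2(dy, dx)
    if distance <= standoff:
        # Already within reach: stay in place and only turn towards the block.
        return robot_xy[0], robot_xy[1], heading
    scale = (distance - standoff) / distance
    return robot_xy[0] + dx * scale, robot_xy[1] + dy * scale, heading


# Hypothetical numbers: block 2 m straight ahead, stop 0.5 m short of it.
goal_x, goal_y, goal_heading = standoff_goal((0.0, 0.0), (2.0, 0.0), 0.5)
```

In the ROS setup described above, the block position would come from the subscribed 3D point topic rather than being hard-coded.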

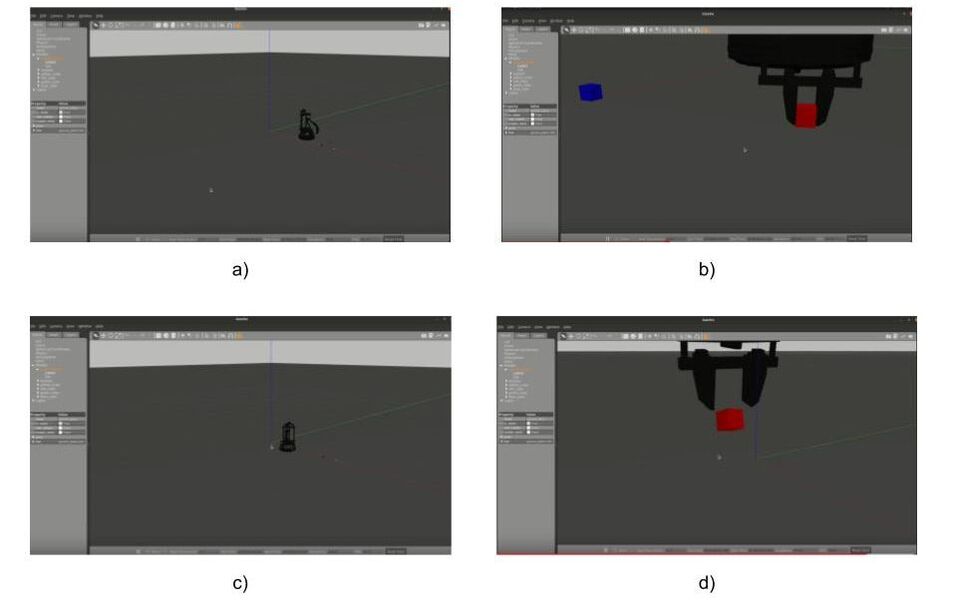

From this point, we put considerable effort into getting the code to run on the real robot, without success (see the Grasping and Manipulation and Controller sections for more details). However, by adjusting some of the friction parameters and fixing the gripper inconsistencies, we were able to get this full loop running in simulation. The figure and video below show the robot running through the grasping and dropping sequence in a Gazebo simulation using ROS.
